Code and Coordinates

engineering at Geocodio

How we upgraded 200+ servers to Debian 13 without downtime

How a fully automated Ansible + Terraform pipeline let us dist-upgrade 200+ production servers to Debian 13 in three days, without a single second of customer downtime.

When I joined Geocodio a few months ago, one of the first things that surprised me was the scale of the infrastructure. From the outside, Geocodio looks like a focused, lean product company. And it is. But under the hood? Over 200 servers running in production.

This is largely due to Geocodio's unique Unlimited plan, which gives customers a dedicated instance exclusively for their own use. This means we have many more servers running than you might find at a typical SaaS company of our size. It surprised me, as it's inefficient from an infrastructure point of view, but customers love the idea of having dedicated resources. Still, it does create some unique infrastructure challenges for us.

And every single one of those 200+ machines needed to be upgraded to Debian 13.

The landscape

Geocodio's infrastructure is more than just "a bunch of API servers." There are load balancers, API nodes, database clusters, Redis instances, ClickHouse nodes, Kafka brokers, ETL workers, monitoring systems, and more. Some are dedicated bare-metal servers; others run on AWS, where our Enterprise platform is SOC 2 and HIPAA compliant.

Each server has a role, and most of them work in pairs or clusters with failover. The API sits behind load balancers, databases are replicated, Redis runs in sentinel mode, and so on. That architecture is the reason this story has a happy ending.

Why now?

When I came on board, the fleet was running a mix of Debian versions. Some servers were on Debian 11, most on Debian 12. Nothing was critically out of date, but it wasn't consistent, either.

Getting everything onto Debian 13 (Trixie) was about establishing a clean baseline. When every server runs the same OS version with the same package versions, debugging becomes simpler, security patching becomes predictable, and future upgrades become less scary. It's the kind of unglamorous work that pays dividends for years.

There's also a practical angle: if you let OS upgrades pile up, you eventually end up with a much harder migration. Staying current means each jump is smaller and more manageable.

Planning the upgrade

Before touching a single server, we needed to define our goals and constraints. The non-negotiable requirement was zero customer downtime. Geocodio's API serves time-sensitive production workloads for thousands of customers. A maintenance window with downtime simply wasn't an option.

Beyond that, we wanted to complete the upgrade during normal working hours within a single work week. No midnight maintenance windows, no weekend shifts. If the architecture couldn't support that, we'd need to rethink the architecture, not the schedule.

With those constraints, a few risks stood out. Stateful services like MariaDB and Redis needed careful sequencing, as you can't just restart a database mid-write. We also expected package-level surprises: old GPG keys, stale apt sources, configuration files that had drifted between Debian versions. And with 200+ servers, there was a near certainty that at least a few would behave unpredictably during the reboot.

The mitigation strategy came down to the redundancy already baked into the architecture. Every critical service runs with failover, so we could upgrade one node at a time without exposing customers to risk. And by codifying the entire process in Ansible, we could fix issues once and apply the fix fleet-wide rather than debugging each server individually.

The approach

We manage our infrastructure with Ansible and Terraform. Ansible handles configuration and package management while Terraform handles provisioning.

The upgrade playbook followed a simple pattern:

1. Drain the server from the load balancer (or mark it as a standby in the cluster).
2. Upgrade the OS with a dist-upgrade.
3. Verify that services come back healthy.
4. Rejoin the server to the pool.
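The four steps above are implemented in Ansible, and the playbook itself is not shown here. As a rough illustration of the control flow only, here is a minimal Python sketch. The function name `upgrade_server` and the four callables are hypothetical stand-ins for whatever actually drains, upgrades, checks, and rejoins a host; the one point the sketch tries to make is that a host which fails verification stays out of the pool.

```python
from typing import Callable


def upgrade_server(
    host: str,
    drain: Callable[[str], None],
    dist_upgrade: Callable[[str], None],
    is_healthy: Callable[[str], bool],
    rejoin: Callable[[str], None],
) -> bool:
    """Run drain -> upgrade -> verify -> rejoin for a single host.

    All four callables are hypothetical hooks supplied by the caller.
    Returns True if the host was rejoined, False if it was left drained.
    """
    drain(host)         # stop routing traffic to the host first
    dist_upgrade(host)  # the OS upgrade and reboot happen while drained
    if not is_healthy(host):
        # Leave a failed host out of the pool for a human to look at.
        return False
    rejoin(host)
    return True
```

Because a failed host simply stays drained, the remaining nodes keep serving traffic while someone investigates.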

We rolled this out roughly five servers at a time. Each batch was a group of servers with the same role, so we always had healthy capacity serving traffic while the upgrade ran.
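To make the batching concrete, here is a small Python sketch of grouping servers by role and splitting each role into batches of at most five. The function name `role_batches` and the inventory shape are hypothetical. The cap of "never more than half of a role at once" is an assumption added for this sketch to keep capacity online for small roles; it is not a rule taken from our playbook.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Tuple


def role_batches(
    servers: List[Tuple[str, str]], batch_size: int = 5
) -> Iterator[Tuple[str, List[str]]]:
    """Yield (role, hosts) batches; every batch contains a single role.

    `servers` is a list of (hostname, role) pairs (hypothetical shape).
    """
    by_role: Dict[str, List[str]] = defaultdict(list)
    for host, role in servers:
        by_role[role].append(host)

    for role, hosts in by_role.items():
        # Assumption of this sketch: take out at most half of a role at
        # a time, and never more than batch_size hosts. Single-node
        # roles would need separate handling.
        per_batch = min(batch_size, max(1, len(hosts) // 2))
        for start in range(0, len(hosts), per_batch):
            yield role, hosts[start:start + per_batch]
```

With twelve API nodes and three load balancers, this yields API batches of 5, 5, and 2, followed by the load balancers one at a time.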

The key insight is that the drain-and-rejoin pattern means customers never notice. If you're hitting the Geocodio API during the upgrade, your request just goes to one of the other nodes. From your perspective, nothing happened.

Stateful services: the tricky part

Draining a stateless API server is straightforward: stop sending it traffic and upgrade. But servers that are actively being written to and read from, like MariaDB and Redis, need more care.

For MariaDB, we relied on replication. Each database cluster has a primary and one or more replicas. The process was: promote a replica to primary, upgrade the old primary (now idle), let it rejoin the cluster as a replica and catch up, then repeat until every node in the cluster was upgraded. At no point was the database unavailable; writes just shifted to a different node.
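The ordering is easier to see written out as a plan. The Python sketch below only generates the sequence of steps for one cluster; it does not talk to MariaDB. The function name, the step labels, and the rule for choosing which replica to promote (prefer one that still needs upgrading) are all assumptions made for illustration.

```python
from typing import List, Set, Tuple

Step = Tuple[str, str]  # (action, node)


def rolling_db_upgrade_plan(primary: str, replicas: List[str]) -> List[Step]:
    """Return the promote/upgrade/rejoin steps for one replicated cluster."""
    if not replicas:
        raise ValueError("need at least one replica to fail over to")

    steps: List[Step] = []
    upgraded: Set[str] = set()
    current = primary
    standby = list(replicas)

    # Each round upgrades whichever node is currently primary, after
    # writes have been moved to a freshly promoted replica.
    while current not in upgraded:
        # Assumption: promote a not-yet-upgraded replica when one exists.
        pending = [node for node in standby if node not in upgraded]
        target = pending[0] if pending else standby[0]

        steps.append(("promote", target))
        steps.append(("upgrade", current))            # old primary, now idle
        steps.append(("rejoin_as_replica", current))  # catches up afterwards

        upgraded.add(current)
        standby.remove(target)
        standby.append(current)
        current = target

    return steps
```

For a two-node cluster, `rolling_db_upgrade_plan("db-a", ["db-b"])` produces six steps: promote `db-b`, upgrade and rejoin `db-a`, then promote `db-a`, upgrade and rejoin `db-b`. Only one node is ever out of the cluster at a time.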

Redis was similar. With Sentinel managing automatic failover, we could take down one Redis instance at a time. Sentinel would promote another node, clients would reconnect through the Sentinel-managed endpoint, and the upgraded node would rejoin and resync. The brief failover window is measured in seconds, and clients handle reconnection transparently.

The general principle is the same as for the stateless servers (always keep enough healthy capacity running), but the sequencing matters a lot more when data consistency is involved. You can't just drain five database servers at once the way you can with API nodes.

The hiccups

Of course, it wasn't completely smooth. Two things tripped us up.

Stale apt sources and GPG keys. This was one we anticipated but underestimated. We knew some of the older servers had drifted, but the scope was wider than expected. Several servers still had apt source lists pointing at archived Debian repositories, and their GPG keys had expired. The dist-upgrade would fail partway through because apt couldn't verify package signatures.

The fix was straightforward: we added a set of pre-tasks to the Ansible playbook that cleaned up old source files and imported fresh GPG keys before the upgrade started. Once we had that in place, it ran cleanly across the rest of the fleet. This is exactly the kind of thing that makes codifying the process in Ansible worthwhile: fix it once, apply it everywhere.
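The actual pre-tasks live in our Ansible playbook. As an illustration of the kind of check involved, here is a small Python sketch that flags stale entries in a classic one-line `sources.list`. It is a detection-only sketch under a few assumptions: the function name is hypothetical, it treats any entry on `archive.debian.org` or on a codename other than the target as stale, and it ignores deb822-style `.sources` files and GPG keys entirely.

```python
import re
from typing import List

ARCHIVE_HOST = "archive.debian.org"    # where retired Debian releases live
_OPTIONS = re.compile(r"\[[^\]]*\]")   # optional [arch=... signed-by=...] field


def stale_source_lines(sources_text: str, target: str = "trixie") -> List[str]:
    """Return the deb/deb-src lines that look stale for `target`."""
    stale: List[str] = []
    for raw in sources_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and comments

        parts = _OPTIONS.sub("", line).split()
        if len(parts) < 3 or parts[0] not in ("deb", "deb-src"):
            continue  # not a one-line source entry

        uri, suite = parts[1], parts[2]
        # "bookworm-security" and "bullseye/updates" reduce to the codename.
        codename = re.split(r"[-/]", suite)[0]

        if ARCHIVE_HOST in uri or codename != target:
            stale.append(line)
    return stale
```

Running this against each host before the upgrade gives a list of lines to rewrite or remove, which is the sort of input a cleanup task needs.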

A handful of servers that didn't come back after reboot. Out of 200+ servers, a few just... didn't come back up after the reboot step. The GRUB bootloader configuration had gotten into a weird state during the dist-upgrade.

These required manual intervention. SSH in (or in a couple of cases, use the out-of-band console), fix the boot configuration, reboot again. Not ideal, but because every service had redundancy, there was still no customer impact while we sorted it out. The healthy nodes kept serving traffic the entire time.

The result

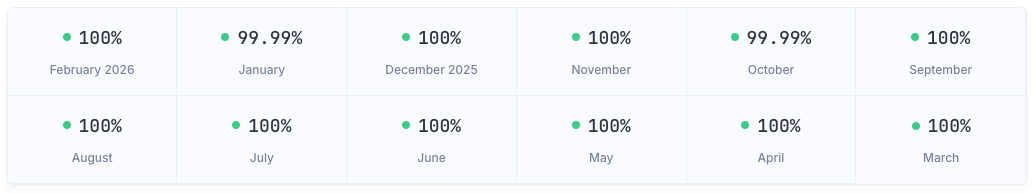

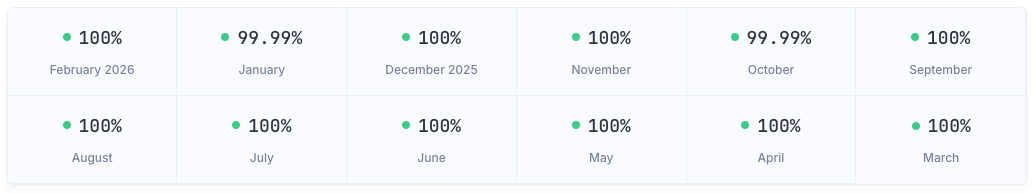

Three normal workdays. 200+ servers. Zero customer downtime.

Our status page only shows two decimal places, so the months showing 99.99% are closer to 99.9999% in practice. We're talking seconds of downtime, not minutes, and none of it was related to the OS upgrades.
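To put those percentages in perspective, here is a quick back-of-the-envelope calculation in Python. It assumes a 30-day month, which is a simplification for this example and not how the status page computes anything.

```python
def downtime_seconds(uptime_percent: float, days: int = 30) -> float:
    """Seconds of downtime implied by an uptime percentage over `days`."""
    total_seconds = days * 24 * 60 * 60  # 2,592,000 for a 30-day month
    return total_seconds * (100.0 - uptime_percent) / 100.0


print(round(downtime_seconds(99.99), 1))    # about 259.2 seconds
print(round(downtime_seconds(99.9999), 1))  # about 2.6 seconds
```

In other words, 99.99% allows a little over four minutes of downtime in a month, while 99.9999% allows under three seconds.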

We went from a mixed fleet of Debian 11 and 12 to a uniform Debian 13 across the board. Every server running the same OS, with the same package versions, and the same security patches.

It's the kind of result that sounds boring when you say it out loud. "We upgraded our operating system and nothing broke." But that's exactly the point. The best infrastructure work is the kind nobody notices.

Takeaways

High availability pays for itself during maintenance, not just during failures. We built HA and failover into the infrastructure because of the uptime guarantees we make to customers, and also for the quality of life it gives us as engineers. High availability and failover mean fewer midnight downtime pages and, relevant to this project, maintenance without service windows. Our customers don't have to plan around "scheduled downtime," and our engineers don't need to be five coffees deep at 2 a.m. during an upgrade window. Just drain, upgrade, rejoin.

Automation is the only way to do this at scale. Manually upgrading 200+ servers isn't just tedious, it's error-prone. Having the process codified in Ansible meant we could fix the apt source issue once and have it applied consistently everywhere. It also means the next upgrade will be even smoother, because the playbook is already written and battle-tested.

Consistency makes everything easier going forward. Now that we're on a uniform Debian 13 fleet, future work (whether that's kernel upgrades, security patches, or tooling changes) becomes predictable. We know exactly what's running on every machine, and we can test changes against a single target instead of a matrix of OS versions.

Start early, upgrade often. The longer you wait between OS upgrades, the harder each one gets. Jumping from Debian 11 to 13 was more work than going from Debian 12 to 13. Staying reasonably current means each step is incremental rather than a big-bang migration.

What's next

With a uniform Debian 13 fleet in place, we can focus on the more interesting work: optimizing the infrastructure, improving our deployment pipeline, and continuing to build out the platform. The Debian upgrade was a foundation, not an end goal.

If you're sitting on a fleet of servers running mixed OS versions and dreading the upgrade, my advice is simple: invest in the automation and the HA architecture first. Once those are in place, the upgrade itself becomes almost boring.

And boring infrastructure is the best kind.
