How Geocodio ensures high availability

How we process billions of lookups every month and stay online

Geocodio processes over two billion lookups every month. Tens of thousands of customers rely on Geocodio to power their own apps, placing significant trust in the reliability and performance of our API and spreadsheet processing tool.

This requires a resilient and fault-tolerant infrastructure that can handle anything that is thrown at it.

Infrastructure

Our internal infrastructure consists of various services, including load balancers, databases, cache servers, and logging handlers. We use dedicated, physical hardware to ensure maximum performance and security.

The golden rule in our infrastructure design is redundancy: there are at least two of everything. Even in the case of a catastrophic hardware or data center failure, another dedicated unit is always ready to take over, so that there is no data loss or service disruption.

This rule is applied on several layers, from having several physical servers dedicated to the same service, to having redundant disks on all machines. This also means over-provisioning hardware, ensuring plenty of additional peak capacity when needed.

Our dedicated hardware is spread over 11 data centers in two geographically separate locations. Even with the complete loss of several data centers, Geocodio’s API will continue to function.

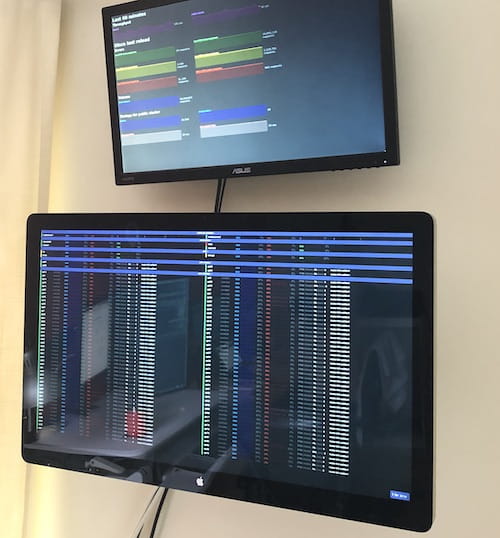

Alerting & Monitoring

We use several internal and external monitoring systems that give us early warnings when error rates, response times, or hardware health appear abnormal.

We publish a public status page that has a live view into our current system status. This is also where we post updates in case of any unexpected service disruptions.

Since critical failures seldom seem to occur during regular business hours, we are on-call 24/7/365. We will be alerted immediately, even at two in the morning, in case of any critical errors.

Software deployments

Geocodio has a culture of continuous delivery. We sometimes deploy changes several times per day.

This has a great benefit of reducing risk. It ensures that most changes are small and incremental. It also allows us to react quickly and take action promptly if an issue is discovered.

The flip side is that it requires a rigorous, automated process to ensure that we can deploy changes with confidence.

We frequently use blue-green deployments. This allows us to slowly roll out new changes to a small subset of customers while monitoring the health of the deployment. If any unexpected issues occur, we can abort a deployment with minimal disruption to our customers.

Geocodio deploys changes with a zero-downtime process that avoids the need for maintenance windows or service disruptions.
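
One common way to pick a stable subset of customers for a gradual rollout is to hash each customer into a fixed bucket. The sketch below is an illustration only and not Geocodio's actual deployment code; the function name `in_rollout`, the customer ID format, and the percentages are all assumptions.

```python
import hashlib


def in_rollout(customer_id: str, percent: int) -> bool:
    """Return True if this customer falls inside the first `percent` of 100 buckets.

    Hypothetical helper: hashing the ID gives every customer a stable bucket,
    so the same customers stay in the rollout as the percentage is raised.
    """
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in [0, 100)
    return bucket < percent


# Example: start with 5% of customers, then widen if the deployment stays healthy.
serve_new_version = in_rollout("customer-1234", percent=5)
```

Because the bucket depends only on the customer ID, raising the percentage adds customers without removing any who already see the new version, and setting it to 0 aborts the rollout for everyone.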

Testing and Q/A

Any change to the Geocodio software starts with our automated test suite. We have more than 1,000 individual tests, organized into sets that each contain many tests. This means we are performing thousands of assertions every time code is deployed.

These tests cover everything from ensuring that the geocoder can handle addresses in tricky formats to ensuring that billing and usage tracking are being performed correctly.

Our test suites are ever-growing. Every improvement we make warrants additional tests being added. This ensures that if we fix a certain geocoding edge case, we can be certain that there are no regressions in the future. It also means that we can launch new features and make certain that they are working as expected.

Automated tests are great for catching potential issues before they reach our customers, but we do not stop there. We also perform rigorous manual testing and Q/A as a supplement to our automated tests.

Performance testing

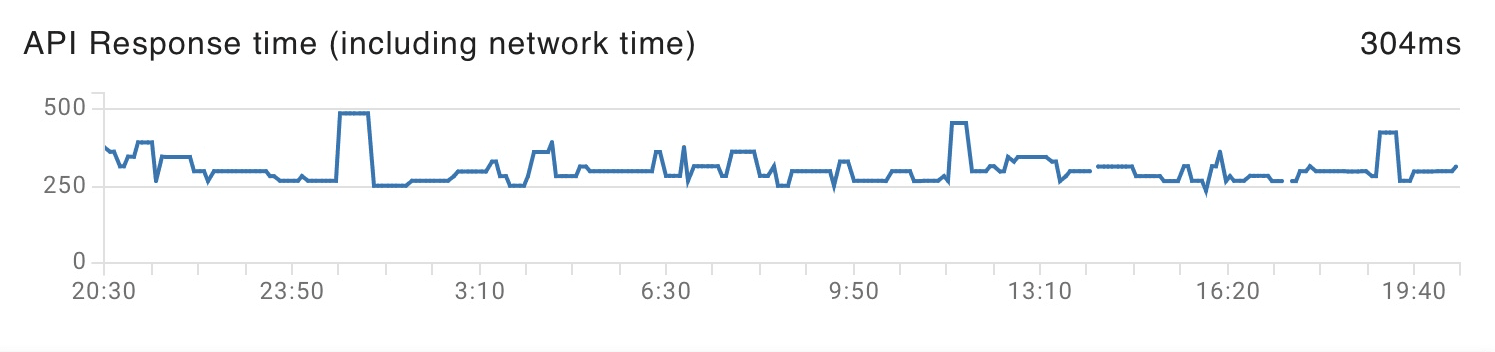

It is imperative that changes to our geocoding engine and API do not have negative performance implications. We do not want to release a change that suddenly increases response times or otherwise reduces the quality of service.

This can be a tricky problem to solve, as performance issues rarely affect all requests or are obvious with regular usage. Because of this, we tackle the problem from multiple angles. We have a separate automated test suite solely dedicated to testing that geocoding processing times stay consistent and do not exceed set thresholds.
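
A threshold test of this kind can be sketched in a few lines. This is a minimal illustration, not our actual test suite; the helper name `assert_within_budget`, the stand-in workload, and the budget value are assumptions.

```python
import time


def assert_within_budget(func, budget_seconds, runs=5):
    """Run `func` several times and fail if the slowest run exceeds the budget.

    Hypothetical helper: checking the slowest run rather than the average
    helps surface issues that only affect some requests.
    """
    slowest = 0.0
    for _ in range(runs):
        start = time.perf_counter()
        func()
        slowest = max(slowest, time.perf_counter() - start)
    if slowest > budget_seconds:
        raise AssertionError(
            f"slowest run took {slowest:.4f}s, budget is {budget_seconds}s"
        )
    return slowest


# Stand-in workload; a real test would call the geocoding code under test.
assert_within_budget(lambda: sum(range(1000)), budget_seconds=1.0)
```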

For larger changes, we also have the ability to quickly stand up a mirror of our production environment and perform load tests that simulate high-volume traffic.

We continue monitoring for performance issues after a change has been deployed. We do this by monitoring processing and response times. We look not only at average response times but also at percentiles, which often tell a more detailed story.
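
To see why percentiles matter, consider a small sketch using Python's standard library. The function name `summarize` and the sample timings are made up for illustration and are not real Geocodio measurements.

```python
import statistics


def summarize(times_ms):
    """Return the mean and selected percentiles of a list of response times."""
    # quantiles(n=100) returns 99 cut points; index k-1 is the k-th percentile.
    cuts = statistics.quantiles(times_ms, n=100, method="inclusive")
    return {
        "mean": statistics.fmean(times_ms),
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
    }


# Hypothetical sample: 95 fast requests (20 ms) and 5 slow ones (1000 ms).
samples = [20] * 95 + [1000] * 5
stats = summarize(samples)
```

For this sample the mean is 69 ms, which describes neither group well: the median is 20 ms, while the 99th percentile is 1000 ms. The slow requests only become visible in the upper percentiles.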

Self-healing

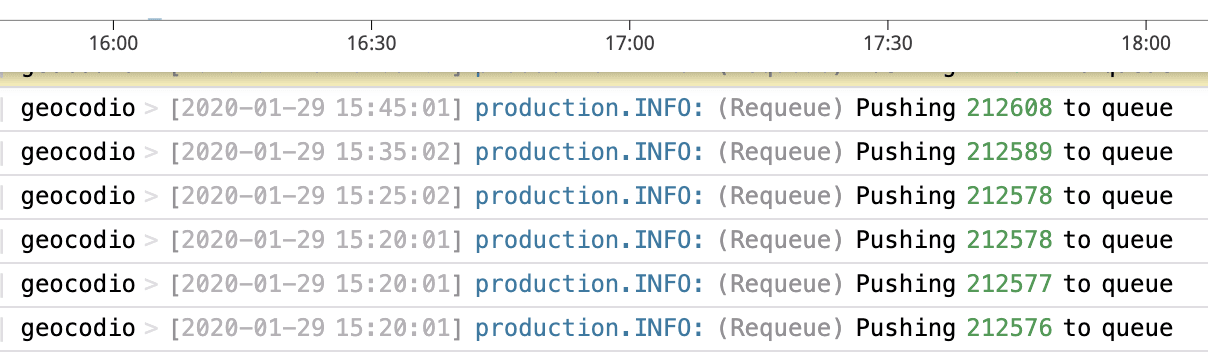

Everything from our server infrastructure to app code has been built with self-healing features and strategies in mind.

This means that unexpected issues can initially be resolved without human intervention. This saves valuable time and reduces the chances of downtime or service disruptions.

Self-healing strategies include automatic failover for internal services and advanced queue monitoring to ensure that spreadsheet jobs do not get stuck or left behind. They also include the ability for the API to continue to function even when major internal services are unresponsive.
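
The general idea behind automatic failover can be sketched as follows. This is a simplified illustration under stated assumptions, not a description of our internal systems; `call_with_failover` and the two example backends are hypothetical.

```python
def call_with_failover(backends, request):
    """Try each backend in order and return the first successful response."""
    errors = []
    for backend in backends:
        try:
            return backend(request)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(exc)
    raise RuntimeError(f"all {len(backends)} backends failed: {errors}")


# Hypothetical backends: the primary is down, the replica answers.
def primary(request):
    raise ConnectionError("primary unavailable")


def replica(request):
    return f"handled {request}"


result = call_with_failover([primary, replica], "lookup")
```

Here the failed call to `primary` is absorbed and the request is answered by `replica`, so the caller never sees the outage. An error is only raised when every backend has failed.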

Risks

So with all of these things in place, Geocodio definitely can’t go down, right?

While we're working very hard to minimize service disruptions as much as possible, they simply aren’t entirely avoidable. We generally operate at an average uptime of 99.99%, which corresponds to a bit more than 4 minutes of unavailability per month on average.
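
The relationship between an uptime percentage and the downtime it allows is simple arithmetic. The helper below is an illustrative sketch; the function name `downtime_minutes` and the 30-day month are assumptions.

```python
def downtime_minutes(uptime_percent, days=30):
    """Return the minutes of downtime allowed by an uptime percentage."""
    total_minutes = days * 24 * 60  # 43,200 minutes in a 30-day month
    return total_minutes * (100 - uptime_percent) / 100


allowed = downtime_minutes(99.99)
```

For 99.99% uptime over a 30-day month this works out to roughly 4.32 minutes.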

We operate Geocodio with the philosophy of continuous improvement. We want to strive to be the best geocoding and data matching platform that you could wish for.

This means that the Geocodio platform is not in “maintenance” mode, but rather continues to receive improvements, new features, and fixes. This is the biggest risk of all, as every change we make could have an undesired effect on our service availability.

Handling outages

In the unlikely event of a service outage or disruption, we will first triage the issue and then post on https://status.geocod.io as soon as we have confirmed it. Our status page is hosted completely separately from the rest of our infrastructure, ensuring it is available even if we were to suffer a major outage.

Fortunately, outages are most often very short events, and we will make sure to keep you updated throughout. You can subscribe to status-only updates on our status page as well.

After an outage has been resolved, we perform a retrospective in which we outline the cause and effect. We then take steps to prevent a similar issue from happening again. This could, for example, involve a process change or additional automated checks to reduce the chance of human error.